
Meta deploys AI tools to detect under-13 users on its platforms

Photo and behaviour analysis to flag accounts, teen safeguards expand globally.

MUMBAI: No more guessing games. Meta is teaching its platforms to spot a user's age before the user declares it. The company is rolling out artificial intelligence tools that analyse photos, videos and user behaviour to identify whether individuals are under the age of 13, tightening enforcement of age restrictions across platforms including Facebook and Instagram. The system evaluates visual cues such as height and bone structure. Meta says it does this not through facial recognition but by interpreting broader visual patterns. These signals are then combined with contextual data from user activity, including posts, captions, comments and interaction patterns, to build a more accurate age estimate.

The approach goes beyond what users declare. References to birthdays, school grades or age-linked milestones are also factored in, allowing the system to scan entire profiles for inconsistencies that may indicate underage use.
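To make the idea of scanning a profile for inconsistencies concrete, here is a minimal sketch of a text-only check. It is purely illustrative and is not Meta's system: the patterns, the function names `implied_ages` and `is_inconsistent`, and the rough grade-to-age offset are all assumptions made for this example, and a real system would weigh many more signals than keyword matches.

```python
import re

# Hypothetical patterns for age-linked phrases; illustrative only,
# not the rules any real platform uses.
AGE_PATTERNS = [
    re.compile(r"\b(?:i am|i'm|im)\s+(\d{1,2})\b", re.IGNORECASE),
    re.compile(r"\b(?:turning|turned)\s+(\d{1,2})\b", re.IGNORECASE),
    re.compile(r"\b(\d{1,2})(?:st|nd|rd|th)\s+(?:birthday|bday)\b", re.IGNORECASE),
]
GRADE_PATTERN = re.compile(r"\b(\d{1,2})(?:st|nd|rd|th)\s+grade\b", re.IGNORECASE)

MIN_AGE = 13
# Assumption: a pupil in grade N is roughly N + 5 years old.
GRADE_AGE_OFFSET = 5


def implied_ages(text):
    """Return every age a piece of text appears to imply."""
    ages = []
    for pattern in AGE_PATTERNS:
        ages += [int(m.group(1)) for m in pattern.finditer(text)]
    ages += [
        int(m.group(1)) + GRADE_AGE_OFFSET
        for m in GRADE_PATTERN.finditer(text)
    ]
    return ages


def is_inconsistent(declared_age, texts):
    """True if the declared age is 13 or over but any text implies under 13."""
    if declared_age < MIN_AGE:
        return False
    return any(age < MIN_AGE for text in texts for age in implied_ages(text))
```

Under these assumptions, a profile that declares an age of 18 but carries the caption "Happy 11th birthday to me" would be flagged as inconsistent, while one captioned "turning 21 next week" would not.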

If flagged, accounts can be deactivated, with users required to complete an age verification process to regain access. The technology is already live in select markets, with a wider global rollout underway. Meta also plans to extend these checks to features such as Instagram Live and Facebook Groups.

The move comes amid mounting regulatory pressure. A jury in New Mexico recently ordered Meta to pay $375 million in civil penalties over allegations linked to platform safety and risks to minors and directed structural changes, adding urgency to the company’s child-safety push.

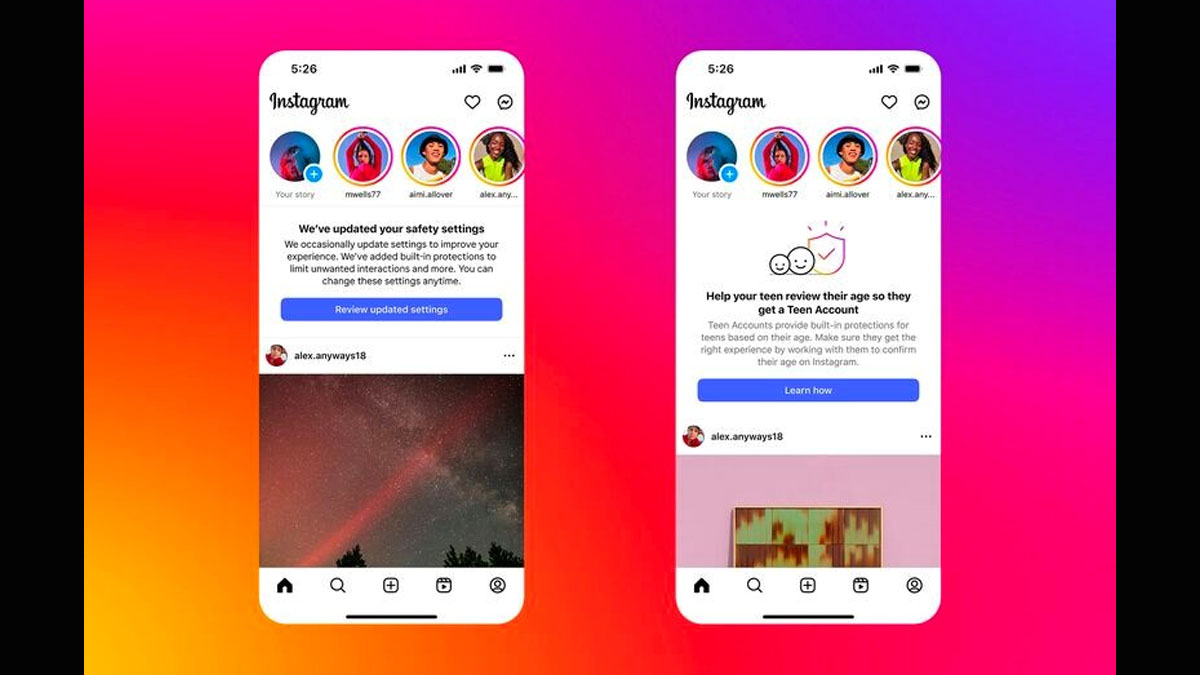

Alongside detection, Meta is also tightening protections. Its “Teen Accounts” feature on Instagram is now expanding to 27 countries across the European Union and Brazil, introducing stricter defaults such as private profiles, direct messaging limited to known contacts and filters for harmful content. Similar safeguards are set to roll out on Facebook in the United States, with further expansion to the UK and EU planned in June.

Together, the updates signal a shift from reactive moderation to proactive policing, in which platforms do not just host users but actively determine who should be there in the first place.