Regulators

TRAI hosts ‘Responsible AI in Telecom’ session at India AI Summit

At the 20 Feb 2026 session, chair Anil Kumar Lahoti stresses trust and guardrails as AI integrates into networks.

MUMBAI: AI in telecom isn’t just calling the shots anymore, it’s running the whole network show, and TRAI wants to make sure the director stays human. The Telecom Regulatory Authority of India (TRAI) convened a dedicated session on “Responsible AI in Telecom” at the India AI Impact Summit 2026, held on 20 February at Sushma Swaraj Bhawan in New Delhi. The gathering drew senior executives from telecom operators, global tech giants like Ericsson, Qualcomm, and Nokia, industry bodies such as GSMA, government arms including DoT and C-DOT, plus international stakeholders for frank talks on weaving AI responsibly into networks and customer-facing services.

TRAI chairman Anil Kumar Lahoti kicked off proceedings with a clear message: “Artificial Intelligence is no longer a peripheral technology for telecom, it is becoming integral to how networks are designed, managed and experienced.” He stressed that as AI influences decisions at population scale (optimising 5G performance, predicting faults, slashing energy use, boosting customer experience, and cracking down on spam), trust must be the cornerstone. Efficiency gains, he said, need transparency, accountability, human oversight, and firm guardrails to guarantee fairness, unbiased results, resilience, security, and public good.

Lahoti highlighted telecom’s role as India’s AI backbone, noting that the massive subscriber base makes AI-driven automation essential. He pointed to ongoing work such as strengthened spam enforcement, AI filtering, and digital consent frameworks for verifiable commercial messaging. TRAI’s approach remains risk-based, favouring regulatory sandboxes to foster innovation while protecting consumers.

Two punchy panel discussions followed. “Preparing Telecom Networks for AI Era,” chaired by TRAI Member Ritu Ranjan Mittar, featured Ericsson CTO Magnus Ewerbring, Qualcomm VP Vinesh Sukumar, Nokia SVP Pasi Toivanen, and Tejas Sr VP Shantigram Jagannath. They unpacked AI adoption in networks, transparency in explainable systems, responsibility-by-design, environmental sustainability, security, and AI-native architectures reshaping 5G management.

The second panel, “Building Customer Trust through AI-driven Operations,” led by TRAI Member Dr M P Tangirala, included GSMA APAC head Julian Gorman, C-DOT CEO Rajkumar Upadhyay, Vodafone India CTSO Mathan Babu Kasilingam, and DoT TEC Sr DDG Syed Tausif Abbas. Topics ranged from accountability in automated decisions, transparency in customer engagement, and ethical frameworks for spam prevention to standards for an AI incident database, which is especially vital for critical infrastructure, and responsible scaling in 5G/6G for fraud detection and analytics.

The session wrapped as a timely reminder: AI can supercharge telecom, but only if trust, balanced governance, and collaboration between regulators, industry, and tech players keep pace. In a country where networks touch more than a billion people daily, getting this right isn’t optional, it’s the line between seamless connectivity and digital chaos.

I&B Ministry

Govt extends TRP suspension for news channels by four weeks amid concerns

I&B ministry cites sensationalism fears linked to West Asia conflict coverage

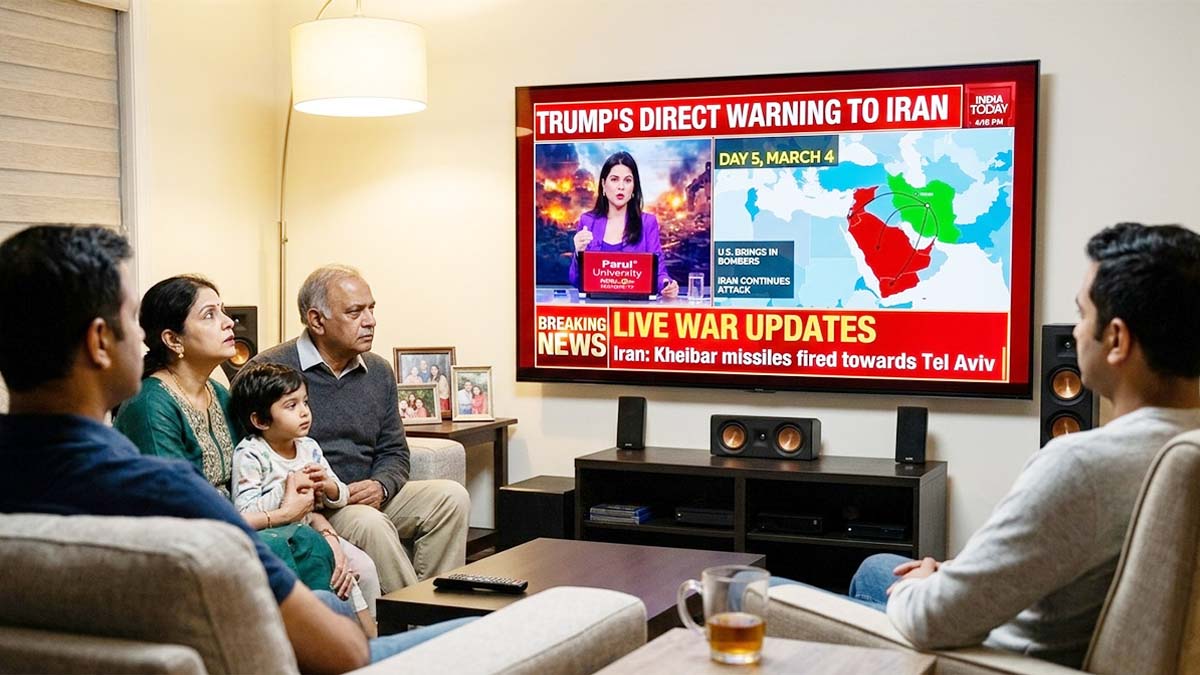

NEW DELHI: The Ministry of Information and Broadcasting has extended the suspension of Television Rating Points for news channels by another four weeks, keeping the industry in a ratings blackout for a longer stretch.

In an order dated March 31, the ministry directed the Broadcast Audience Research Council to continue withholding TRP data “for a further period of four weeks or until further directions, whichever is earlier.” This marks the second such directive after an initial four-week pause was imposed on March 6.

The government said the extension is aimed at curbing unwarranted sensationalism and speculative reporting, particularly in the context of the ongoing tensions in West Asia. It noted that the conflict continues to evolve and could trigger anxiety among viewers, especially those with personal or economic ties to the region.

TRPs serve as the primary yardstick for measuring television viewership and play a crucial role in shaping advertising revenues and competitive positioning among news broadcasters. Their absence effectively removes a key performance benchmark, forcing channels to operate without publicly available ratings.

The directive applies specifically to news television channels and has been issued under the government’s regulatory powers in the interest of public order. While the move is framed as a temporary measure, its continuation suggests ongoing concerns about the tone and nature of coverage.

For broadcasters, the extended blackout means navigating a high-stakes news cycle without the usual scoreboard. Whether it tempers the noise or simply shifts the battle elsewhere remains to be seen, but for now, the ratings race is officially on pause.