eNews

Google takes down 1.7 bn. ads for violating policies

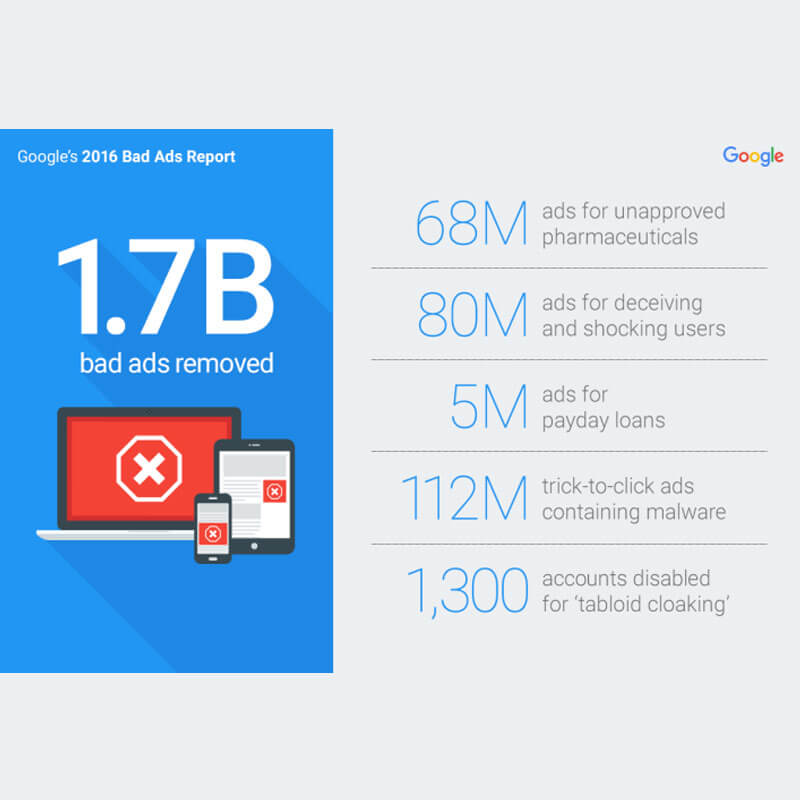

MUMBAI: In 2016, Google took down 1.7 billion ads that violated its advertising policies, more than double the number of bad ads it took down in 2015, according to the latest ‘Better Ads Report’ for 2016 released by the company.

“A free and open web is a vital resource for people and businesses around the world. And ads play a key role in ensuring you have access to accurate, quality information online. But bad ads can ruin the online experience for everyone. They promote illegal products and unrealistic offers. They can trick people into sharing personal information and infect devices with harmful software. Ultimately, bad ads pose a threat to users, Google’s partners, and the sustainability of the open web itself,” said Sustainable Ads Product Management director Scott Spencer.

Last year, Google did two key things to take down more bad ads. First, it expanded the company’s policies to better protect users from misleading and predatory offers. For example, in July it introduced a policy to ban ads for payday loans, which often result in unaffordable payments and high default rates for users. In the six months since launching this policy, Google disabled more than five million payday loan ads.

Second, it beefed up its technology to spot and disable bad ads even faster. For example, “trick to click” ads often appear as system warnings to deceive users into clicking on them. Users who click often do not realize they are downloading harmful software or malware. In 2016, Google detected and disabled a total of 112 million “trick to click” ads, six times more than in 2015.

According to the report, the most common inappropriate online ads were those for illegal products. Google disabled more than 68 million bad ads for healthcare violations and 17 million ads for illegal gambling violations in 2016.

Some bad ads try to drive clicks and views by intentionally misleading people with false information, such as asking ‘Are you at risk for this rare, skin-eating disease?’ or offering miracle cures like a pill that will help people lose 50 pounds in three days without lifting a finger. To protect consumers against such ads, Google took down nearly 80 million bad ads for deceiving, misleading and shocking users in 2016.

As for ads developed exclusively for the mobile web, Google’s systems detected and disabled over 23,000 ‘self-clicking ads’ on its platforms in 2016, compared with only a few thousand the previous year. Similarly, the report highlighted a dramatic increase in scamming activity in 2016: approximately 7 million bad ads were disabled for intentionally attempting to trick Google’s detection systems.

2016 also saw the rise of a new type of scammer called ‘tabloid cloakers’, who take advantage of current trends and hot topics: a government election, a trending news story or a well-known celebrity. The ads used by these scammers may look like headlines for real articles on a news website, but when clicked, they redirect consumers to a site selling weight-loss products. In 2016, Google suspended over 1,300 accounts for ‘tabloid cloaking’. In December alone, Google took down 22 ‘cloakers’ that were responsible for ads seen over 20 million times by people online in a single week.

Over the years, Google has been working to find ads that violate its policies and to block the ad or the advertiser, depending on the violation. In 2016, it took action on 47,000 sites for promoting content and products related to weight-loss scams. It also took action on more than 15,000 sites for unwanted software and disabled 900,000 ads for containing malware. Around 6,000 sites and 6,000 accounts were suspended for attempting to advertise counterfeit goods, like imitation designer watches.

To keep its content and search networks safe and clean, Google has introduced stricter policies, including the new AdSense misrepresentative content policy. The policy update, introduced in November 2016, enables the company to take action against website owners who misrepresent who they are and deceive users with their content.


Paisabazaar launches Credit Premier League 2.0

Nationwide campaign rewards highest credit scores with Rs 1 lakh top prize.

MUMBAI: When credit scores become a national league, even your CIBIL report starts feeling like it’s playing in the IPL, and Paisabazaar has just kicked off the second season. Paisabazaar, India’s leading marketplace for financial products and the country’s largest free credit score platform, has announced the return of the Credit Premier League (CPL) 2.0, a fun, nationwide initiative to recognise and reward individuals with the highest credit scores.

Building on the success of the first edition, CPL 2.0 introduces higher rewards and broader participation. The individual(s) with the highest credit score in the country will win Rs 1 lakh, while state champions will each receive Rs 10,000. Additionally, all participants from the winning state, the one with the highest average credit score, will also be rewarded.

All winnings will be credited directly to winners’ PB Wallet, allowing them to pay credit card bills, recharge mobiles, or settle utility bills seamlessly on the Paisabazaar platform.

Paisabazaar CEO Santosh Agarwal said the campaign aims to make credit awareness more engaging and mainstream. “With CPL, we are bringing together engagement, gamification and rewards to make conversations around credit scores more mainstream,” he noted. “Our focus remains on building a financially aware and credit-healthy Bharat.”

The first edition of CPL saw over 5.5 million participants, with the highest individual score touching 861. Delhi recorded the highest average credit score of 746.

Consumers can participate simply by checking their free credit score on the Paisabazaar platform or app. The CPL leaderboard and rankings will be available exclusively on the Paisabazaar App.

In a country where financial dreams are serious business, Paisabazaar has found a smart way to turn credit scores into an exciting game – because when your financial health gets rewarded, everyone wants to play.