Digital

Click-bait and switch: AI fraud spins a new web around digital advertising

MUMBAI: Click, scroll… fooled again? In a plot twist worthy of a digital thriller, mFilterIt’s Ad Fraud Intelligence Report 2025 reveals that the world of online advertising is being quietly hijacked by a new kind of impostor: AI-shaped fraud that looks, moves, and behaves uncannily like real users, slipping past traditional defences with a confidence that would impress even the boldest scammer. What once looked like a technical hiccup now emerges as a full-blown trust crisis.

The report, based on billions of validated data points across platforms, shows the scale of the upheaval. Fraud sophistication has tripled in just two years, creating an ecosystem where even “clean” traffic can no longer be taken at face value. Programmatic campaigns, for instance, saw between 30 and 45 per cent of supposedly valid traffic fail deeper checks. Walled gardens, long considered the industry’s gated sanctuaries, showed 9 to 18 per cent of activity with signs of behavioural manipulation, a figure that becomes even more damaging because these environments run on premium CPMs and CPCs. Meanwhile, affiliate networks remain a messier battlefield, contributing 43 per cent of the invalid traffic detected, often through lead punching, organic hijacking, duplicated events, and inorganic installs masquerading as high-intent users.

Even the old comfort of “viewability” has now become little more than a technical nicety. The report dismantles the myth that viewable impressions are genuinely seen by humans. AI-driven bots, operating across multiple channels, now mimic real browsing behaviour so convincingly that they complete scroll gestures, replicate dwell times, and interact with content at human-like intervals. The result is a flood of impressions that are technically viewable, yet deliver zero actual human attention. Ads routinely appear in environments such as MFA (Made-for-Advertising) sites that stack or stuff multiple placements, pass viewability benchmarks with ease, and still offer no meaningful exposure. Across audits, mFilterIt found numerous cases where ads achieved perfect viewability scores while human engagement was non-existent.

Brand safety, often treated as a solved problem, also emerges as a façade. Legacy systems built on keyword filtering, English-first logic, and surface-level metadata are now woefully inadequate in a digital world dominated by visuals, reels, thumbnails, regional dialects, and cultural nuance. The report documents misclassified content across YouTube, OTT, and UGC platforms, where ads meant for general audiences ended up beside gambling pages, emotionally charged vernacular videos, or unsuitable made-for-kids content. In fact, 7 to 9 per cent of YouTube impressions in analysed campaigns appeared on children’s content, a direct waste of money and a massive mismatch in targeting relevance. Visual-first formats repeatedly slipped past keyword filters, and regional languages across India and the Middle East were routinely misunderstood or entirely misread by traditional tools.

Frequency capping, another long-standing comfort blanket of advertisers, fares no better. The belief that setting a cap guarantees controlled exposure simply doesn’t hold. The report shows that 15 to 20 per cent of CTV and OTT impressions violated their assigned caps, often showing users the same ad eight to twelve times despite a supposed ceiling of three. Because platforms apply frequency as an average rather than a maximum, some users barely see ads while others are bombarded. The fragmentation of user identities across devices, spoofed IDs, and reseller delivery paths makes these violations nearly invisible. The outcome is predictable: irritated audiences, declining attention, limited reach, and skewed optimisation.
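The gap between an average cap and a hard ceiling is easy to see with a few lines of arithmetic. The sketch below is purely illustrative: the `cap_report` helper, the user IDs, and the impression log are hypothetical and are not drawn from the mFilterIt report. It shows how a campaign can report an average frequency under three while individual users are served the same ad eight or ten times.

```python
from collections import Counter


def cap_report(impression_user_ids, cap):
    """Summarise per-user exposure against a frequency cap.

    impression_user_ids: one entry per served impression (hypothetical log).
    cap: the intended maximum number of impressions per user.
    """
    if not impression_user_ids:
        raise ValueError("impression log is empty")

    per_user = Counter(impression_user_ids)
    counts = list(per_user.values())
    # Users whose exposure exceeds the cap, and how many impressions overshoot it.
    over = {uid: n for uid, n in per_user.items() if n > cap}
    over_cap_impressions = sum(n - cap for n in over.values())

    return {
        "average_frequency": sum(counts) / len(counts),
        "max_frequency": max(counts),
        "users_over_cap": sorted(over),
        "share_of_impressions_over_cap": over_cap_impressions / sum(counts),
    }


# Hypothetical log: two heavily exposed users, eight users who saw the ad once.
log = ["u1"] * 10 + ["u2"] * 8 + [f"u{i}" for i in range(3, 11)]
report = cap_report(log, cap=3)
print(report)
```

In this made-up log the average sits below the cap even though two users are well over it, which is why a check on the maximum per user, rather than the mean, is the more telling audit.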

App ecosystems, once thought to be the cleanest segment of the funnel, reveal their own cracks. Attribution platforms report “clean installs”, but fail to validate whether the user behind the install is real. According to mFilterIt, between 45 and 55 per cent of installs in some campaigns displayed anomalies such as device duplication, automated install farms, spoofed sessions, or unnatural click-to-install times engineered to hijack organic users. In one case, a petroleum client discovered that 21 per cent of its “clean” installs were actually referral coupon abuse, draining budgets without adding a single meaningful user.
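Two of the anomalies listed above, unnatural click-to-install times and duplicated devices, lend themselves to a simple check. The following is a minimal sketch under stated assumptions, not mFilterIt's method: the 10-second threshold, the record fields, and the `flag_installs` helper are all hypothetical, and a production system would weigh many more signals.

```python
from datetime import datetime, timedelta

# Assumed threshold for illustration only: an install that completes within
# 10 seconds of the click is treated as implausibly fast.
MIN_PLAUSIBLE_CTIT = timedelta(seconds=10)


def flag_installs(installs, min_ctit=MIN_PLAUSIBLE_CTIT):
    """Return {install_id: [reasons]} for installs that look anomalous.

    Each install is a dict with hypothetical keys:
    install_id, device_id, click_time, install_time.
    """
    seen_devices = set()
    flagged = {}
    for inst in installs:
        reasons = []
        # Click-to-install time (CTIT): very short gaps suggest click injection.
        if inst["install_time"] - inst["click_time"] < min_ctit:
            reasons.append("short_ctit")
        # The same device ID appearing again suggests device duplication.
        if inst["device_id"] in seen_devices:
            reasons.append("duplicate_device")
        seen_devices.add(inst["device_id"])
        if reasons:
            flagged[inst["install_id"]] = reasons
    return flagged


t0 = datetime(2025, 1, 1, 12, 0, 0)
installs = [
    {"install_id": "a", "device_id": "d1", "click_time": t0,
     "install_time": t0 + timedelta(seconds=95)},
    {"install_id": "b", "device_id": "d2", "click_time": t0,
     "install_time": t0 + timedelta(seconds=2)},
    {"install_id": "c", "device_id": "d1", "click_time": t0,
     "install_time": t0 + timedelta(seconds=120)},
]
flagged = flag_installs(installs)
print(flagged)
```

An attribution dashboard would count all three of these sample installs as clean; only the second look at timing and device identity separates them.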

Affiliate and performance-driven ecosystems continue to attract sophisticated manipulation. One automobile brand found that 70 per cent of invalid events were generated by a single affiliate partner through punched leads. Across multiple campaigns, mFilterIt observed up to 35 per cent of affiliate traffic showing inorganic patterns, robotic form fills, or action-driven manipulation that made conversion metrics look exceptional, even as actual business outcomes declined. High conversion rates, often treated as a badge of campaign health, are shown to be just as vulnerable; 30 to 35 per cent of in-app events in some fintech and crypto campaigns were fraudulent despite “strong” reported CVRs.

Influencer ecosystems do not escape scrutiny either. The report reveals that follower counts and engagement rates, the industry’s favourite shorthand metrics, hide vast chasms in audience quality. Some influencers analysed had fewer than 20 per cent suspicious followers, while for others the share reached an astonishing 90 to 100 per cent, raising questions about inorganic growth, bot-based engagement, and artificially inflated sentiment. Without authenticity checks, brands risk paying for reach that never actually reaches anyone.

Retargeting is another quiet casualty. Since bots, spoofed devices, and incent-driven users generate actions that drop cookies or identifiers, remarketing lists become contaminated by non-human audiences. Engagement partners that fire phantom clicks often hijack organic traffic or register sessions immediately after an install. In one case from a quick-commerce platform, random background clicks attempted to claim organic conversions, distorting the entire optimisation pathway. Retargeting then becomes an exercise in chasing ghosts: audiences that look warm on paper but cannot convert because they never existed.

All this is unfolding against an expanding global digital ad market projected to reach $678.7 billion in 2025, representing 68.4 per cent of all advertising. Retail media, growing at 13.9 per cent, social at 9.2 per cent, programmatic at 8.4 per cent, and CTV/OTT at 10.9 per cent, offer abundant opportunities, and equally abundant chances for AI-led fraud to seep in unnoticed.
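Taken together, the two headline figures imply a size for the overall advertising market. The short calculation below is a back-of-the-envelope derivation, not a number quoted in the report; it assumes the $678.7 billion and 68.4 per cent figures refer to the same year and base, and the result inherits their rounding.

```python
# Figures as quoted in the article.
digital_spend_bn = 678.7   # projected global digital ad spend, USD billions
digital_share = 0.684      # digital as a share of all advertising

# Derived values (assumption: both figures share the same year and base).
total_ad_spend_bn = digital_spend_bn / digital_share
non_digital_bn = total_ad_spend_bn - digital_spend_bn

print(f"Implied total ad market: ${total_ad_spend_bn:.1f}bn")
print(f"Implied non-digital spend: ${non_digital_bn:.1f}bn")
```

On these assumptions the total market works out to a little under $1 trillion, with digital accounting for roughly two dollars in every three.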

The report ultimately reframes the issue: this is no longer a traffic problem but a trust problem. As CEO Amit Relan puts it, “The real risk in digital advertising is not fraud itself, but the illusion of clean data.” CTO Dhiraj Gupta echoes the urgency, noting that fraud now mirrors human behaviour with such fidelity that rule-based systems stand no chance. Traditional metrics such as viewability, clicks, CTR, and installs have lost their authority. The industry’s next frontier lies in full-funnel validation, multi-signal intelligence, contextual understanding, and attention-led measurement rather than surface-level exposure.

mFilterIt calls for advertisers to move away from fragmented verification and towards systems that connect impression integrity, contextual safety, behavioural authenticity, and conversion truth. In an AI-accelerated landscape where every stage of the funnel can be distorted, digital trust is no longer a nice-to-have; it is the new measure of performance. If the advertising world once asked “Where did my ad run?”, the new question might well be “Did any of it reach a real human at all?”

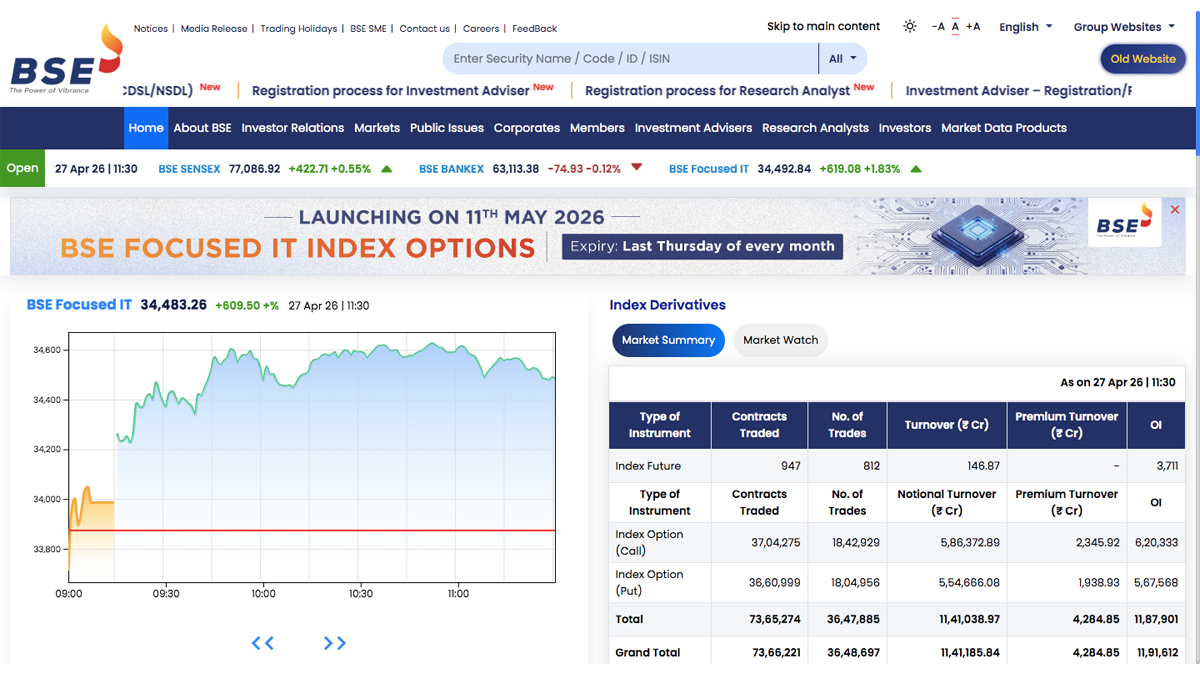

BSE revamps website with real-time data, mobile-first design, smart search

New platform brings cleaner layout, live market trackers and easier navigation

MUMBAI: BSE has rolled out a major redesign of its official website, aiming to make market data faster to access and easier to navigate for both seasoned traders and new-age retail investors.

The updated platform introduces a cleaner, more modern interface, replacing the earlier dense and text-heavy layout with a streamlined design. Navigation has been simplified with clearly segmented menus across markets, corporates, public issues, members, investment advisers and research analysts, helping users find information without the usual maze of links.

At the top, a refreshed header now offers quick access to notices, media releases, trading holidays and career updates. A centralised search bar allows users to instantly locate securities using names, codes, IDs or ISINs, cutting down the time spent digging through pages. For those still attached to the old layout, a dedicated toggle lets users switch back during the transition period.

A key highlight of the revamp is the sharper focus on real-time market data. A live ticker band now runs across the site, offering updates on indices including the SENSEX and BANKEX, alongside pre-open market signals. The homepage also features interactive charts, giving users a quick visual read of market trends without needing to navigate deeper.

Market activity sections such as top gainers, losers, turnover stocks and block deals have been reorganised into tabbed formats, making them more intuitive and easier to scan. Meanwhile, specialised areas like index derivatives and corporate data have been upgraded with better visualisation tools, offering clearer insights into contracts, turnover, open interest and company fundamentals.

The overhaul also reflects a strong mobile-first approach. With a growing number of investors tracking markets on their phones, the new site is fully responsive, ensuring charts and data tables remain readable and interactive across devices.

With this redesign, BSE appears to be aligning its digital presence with the needs of a more tech-savvy investor base, where speed, clarity and usability are just as critical as the data itself.